Collaborative AI Development Ethics: A Necessity for Responsible AI Growth

Introduction

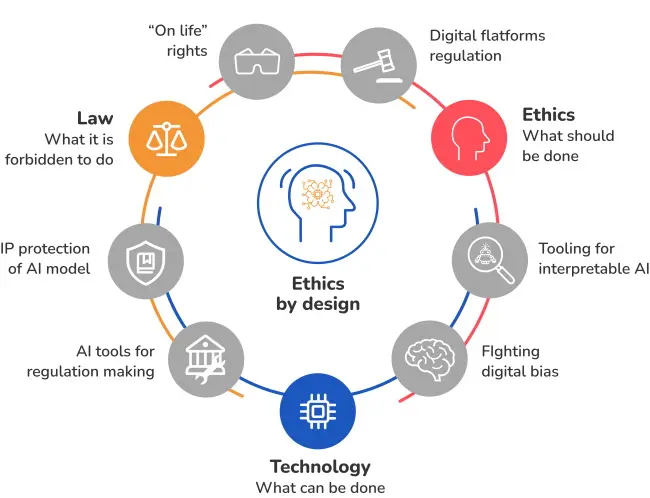

Rapid advances in artificial intelligence (AI) have prompted widespread concern and debate about its ethics. As AI becomes increasingly integrated into daily life, it is essential to develop AI systems that are genuinely beneficial and aligned with societal values. Collaborative AI development ethics is therefore a critical approach: it focuses on building a shared understanding of, and a shared effort towards, responsible AI growth.

The growth of AI has substantially redefined technology's role in our lives. It challenges our understanding of ethics in several fundamental ways and creates a need for innovation that augments and enhances the human experience. For instance, AI-generated content raises questions about ownership, intellectual property rights, and the responsibility of developers.

Why is Collaborative AI Development Ethics Crucial?

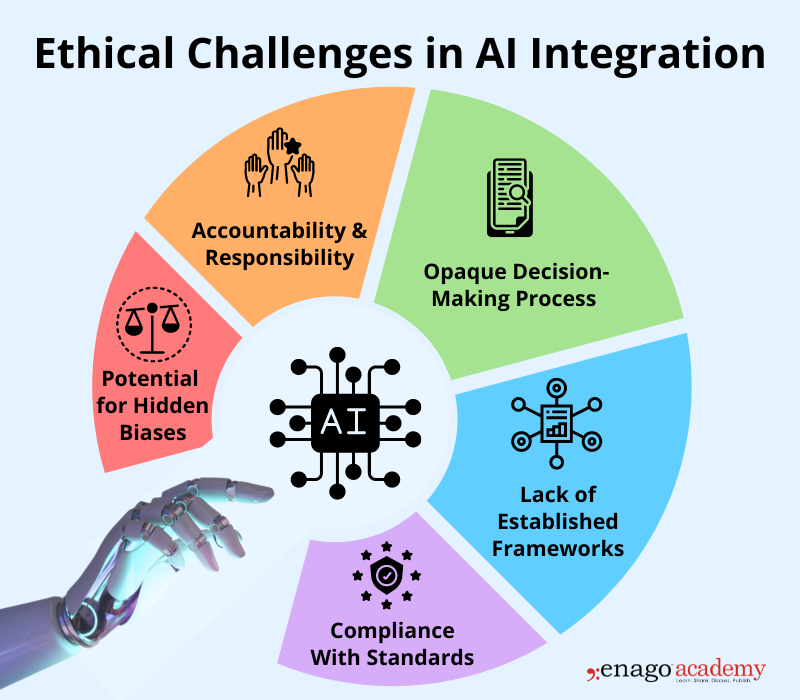

- **Ensures Transparency and Accountability**: A collaborative approach to AI development involves a multidisciplinary team that ensures transparency at various stages of development, fostering trust and accountability within the organization.

- **Addresses Algorithmic Bias**: By working together, experts from different fields can identify and address algorithmic bias, a persistent concern in AI systems, making those systems fairer and more transparent.

- **Protects Human Rights**:

  - **Data Privacy**: Collaborative AI development can safeguard data privacy by integrating adequate security measures from the outset.

  - **Control and Consent**: Collaboration between developers and stakeholders helps protect human rights by keeping people in control of how their data is used and ensuring that use rests on their consent.

- **Empowers Global Collaboration**: Developing AI in a collaborative setting fosters a global effort, encourages diverse perspectives, and opens up new avenues for research and problem-solving.